Stop Giving AI Agents Ambient OS Permissions: The Case for Runtime Trust Infrastructure

Current AI agent frameworks are inherently insecure by design. Security must move from the planning layer to the execution layer. Here's how Runtime Trust Infrastructure solves the problem.

- Authorization latency: <25ms
- Token reduction: 90-99%
- LLM-as-judge: zero

The Uncomfortable Truth About AI Agents

The explosive rise of autonomous agent frameworks like CrewAI, LangChain, and OpenClaw has been intoxicating for developers. The promise is incredible: hand an LLM a goal, equip it with tools (filesystem access, web browsers, API clients), and watch it autonomously plan, reason, and execute complex workflows.

But as teams move from weekend science projects to enterprise production, they are hitting a terrifying architectural wall.

The Core Problem

Current AI agent frameworks are inherently insecure by design.

The industry standard right now is to give an AI agent ambient operating system permissions and then cross your fingers. We trust that a probabilistic model, operating on potentially malicious user input (prompt injection), won't hallucinate a destructive command or exfiltrate sensitive data.

This approach does not survive contact with an enterprise Chief Information Security Officer (CISO).

The Fallacy of Passive AI Governance

The market is currently being flooded with "AI Governance" tools. These products attempt to secure agents by scanning prompts for PII, monitoring model "alignment," or—most absurdly—by having a second LLM act as a "judge" to verify the first LLM's work.

This is bureaucracy masquerading as security.

If your primary defense against an agent wiping a production table is asking another probabilistic model "did that look okay?", you have already lost.

Furthermore, recent proposals suggesting "intent-based security"—where the agent's generated "plan" is cryptographically signed before execution—are equally flawed. If an agent is successfully prompt-injected, its intent has been successfully hijacked. A signed malicious intent is still malicious.

The Key Insight

Security must move from the planning layer (the prompt) to the execution layer (the system call).

We need Runtime Trust Infrastructure for Agentic Systems.

Enter Predicate Systems: Hard Isolation for LLM Actions

Predicate Systems is not a governance dashboard. We build predicate-secure, a zero-trust execution sandbox that wraps any AI agent framework, with SDKs for both Python and TypeScript. Our architecture gates the agent execution loop at two strict, deterministic checkpoints.

We operate on a simple philosophy:

Let the agent YOLO its reasoning; just don't let it YOLO your file system.

Here is how Runtime Trust Infrastructure works.

Phase 1: Pre-Execution Authorization (The Gate)

A serious agentic workflow rarely needs raw, unrestricted OS permissions. An analyst agent needs to write to a specific /data/reports/ directory; it does not need read access to /etc/passwd or the SSH keys in ~/.ssh/.

We provide decentralized, granular IAM (Identity and Access Management) for AI agents without requiring developers to rewrite their tools.

1. Decoupled Policies

Developers define a declarative YAML policy that dictates exactly what resources a specific agent can touch.

```yaml
version: "1.0"

metadata:
  scenario: "market-research-agent"
  author: "security-team"
  compliance: ["SOC2", "GDPR"]
  default_posture: "deny" # Fail-closed: block anything not explicitly allowed

rules:

  # ============================================================================
  # FILESYSTEM RULES
  # ============================================================================

  # DENY: Block reads to sensitive system files (evaluated first)
  - name: deny-sensitive-fs-read
    effect: deny
    actions: ["fs.read", "fs.list"]
    resources:
      - "/etc/passwd"
      - "/etc/shadow"
      - "/etc/sudoers"
      - "~/.ssh/*"
      - "~/.aws/*"
      - "**/.env"
      - "**/credentials.json"

  # DENY: Block all filesystem writes outside workspace
  - name: deny-fs-write-outside-workspace
    effect: deny
    actions: ["fs.write", "fs.delete"]
    resources:
      - "/**" # Block everything by default

  # ALLOW: Read from project workspace
  - name: allow-workspace-read
    effect: allow
    actions: ["fs.read", "fs.list"]
    resources:
      - "/workspace/data/**"
      - "/workspace/config/*.yaml"

  # ALLOW: Write only to reports directory
  - name: allow-reports-write
    effect: allow
    actions: ["fs.write"]
    resources:
      - "/workspace/data/reports/*.csv"
      - "/workspace/data/reports/*.json"

  # ============================================================================
  # BROWSER RULES
  # ============================================================================

  # DENY: Block navigation to internal/admin URLs
  - name: deny-internal-urls
    effect: deny
    actions: ["browser.navigate"]
    resources:
      - "https://*.internal.company.com/*"
      - "https://admin.*"
      - "http://localhost:*"
      - "http://127.0.0.1:*"

  # ALLOW: Navigate to approved research domains
  - name: allow-research-navigation
    effect: allow
    actions: ["browser.navigate", "browser.click", "browser.scroll"]
    resources:
      - "https://news.ycombinator.com/*"
      - "https://www.reuters.com/*"
      - "https://finance.yahoo.com/*"
      - "https://www.google.com/search*"
```

2. The Sub-Millisecond Rust Bouncer

We use a local Rust sidecar (predicate-authorityd). When the agent attempts a tool call (e.g., fs.write('/etc/passwd', ...)), the predicate-secure SDK intercepts the call before it reaches the OS. It sends the action and resource to the sidecar, which evaluates them against the policy in under two milliseconds.

If the action violates the policy, the sidecar returns a hard explicit_deny, and the host OS is never touched.
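To make the fail-closed idea concrete, here is a minimal sketch of how an action and resource could be checked against allow/deny rules. It is not the predicate-authorityd implementation: the `Rule` and `Decision` types, the `evaluate` function, and the glob semantics (`**` crosses `/`, `*` stays within one path segment) are all assumptions made for illustration. It also assumes deny rules always win over allow rules, and it skips `~` expansion and URL patterns entirely.

```typescript
// Hypothetical sketch: illustrates fail-closed evaluation, not the actual sidecar.
type Effect = "allow" | "deny";

interface Rule {
  name: string;
  effect: Effect;
  actions: string[];
  resources: string[]; // glob patterns
}

interface Decision {
  allowed: boolean;
  reason: string; // name of the matching rule, or "default_deny"
}

// Assumed glob semantics: "**" may cross "/" boundaries, "*" stays inside one segment.
function globToRegExp(glob: string): RegExp {
  let pattern = "";
  for (let i = 0; i < glob.length; i++) {
    const ch = glob.charAt(i);
    if (ch === "*" && glob.charAt(i + 1) === "*") {
      pattern += ".*";
      i++; // consume the second "*"
    } else if (ch === "*") {
      pattern += "[^/]*";
    } else {
      pattern += ch.replace(/[.+?^${}()|[\]\\]/g, "\\$&"); // escape regex metacharacters
    }
  }
  return new RegExp("^" + pattern + "$");
}

function ruleMatches(rule: Rule, action: string, resource: string): boolean {
  return (
    rule.actions.indexOf(action) !== -1 &&
    rule.resources.some((glob) => globToRegExp(glob).test(resource))
  );
}

// Any matching deny rule wins; otherwise the first matching allow rule applies;
// anything unmatched is denied (fail-closed).
function evaluate(rules: Rule[], action: string, resource: string): Decision {
  let firstAllow: Rule | undefined;
  for (const rule of rules) {
    if (!ruleMatches(rule, action, resource)) continue;
    if (rule.effect === "deny") return { allowed: false, reason: rule.name };
    if (firstAllow === undefined) firstAllow = rule;
  }
  if (firstAllow !== undefined) return { allowed: true, reason: firstAllow.name };
  return { allowed: false, reason: "default_deny" };
}

// A subset of the rules from the policy above.
const exampleRules: Rule[] = [
  { name: "deny-sensitive-fs-read", effect: "deny", actions: ["fs.read", "fs.list"], resources: ["/etc/passwd", "**/.env"] },
  { name: "allow-workspace-read", effect: "allow", actions: ["fs.read", "fs.list"], resources: ["/workspace/data/**"] },
  { name: "allow-reports-write", effect: "allow", actions: ["fs.write"], resources: ["/workspace/data/reports/*.csv"] },
];

console.log(evaluate(exampleRules, "fs.write", "/workspace/data/reports/q3.csv")); // allowed by allow-reports-write
console.log(evaluate(exampleRules, "fs.write", "/etc/passwd"));                    // denied: default_deny
console.log(evaluate(exampleRules, "fs.read", "/workspace/data/.env"));            // denied by deny-sensitive-fs-read
```

Note the last case: the path sits inside the allowed workspace, but the deny rule still wins. Under this deny-overrides assumption, a blanket deny on a broad pattern would also shadow any narrower allow rule, so how a real engine orders and combines rules matters a great deal.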

How Pre-Execution Authorization Works

1. Interception: The SDK pauses the tool call before execution.

2. Policy Check: The sidecar evaluates the action against declarative YAML rules.

3. Mandate Issuance: If safe, a cryptographic mandate ("Work Visa") is issued.

4. Execution: The mandate is passed to the OS or backend API.
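The four steps above can be sketched as a wrapper around a tool function. This is an illustration only: `guard`, `Authorizer`, `Mandate`, and `toyAuthorizer` are hypothetical names, not the predicate-secure API, and the mandate here is a plain object with no signature, where a real one would be cryptographically signed.

```typescript
// Hypothetical sketch of the four steps; none of these names come from the predicate-secure SDK.
interface Mandate {
  action: string;
  resource: string;
  issuedAt: number; // a real mandate would also carry a signature; omitted here
}

type AuthResult =
  | { decision: "allow"; mandate: Mandate }
  | { decision: "deny"; reason: string };

type Authorizer = (action: string, resource: string) => AuthResult;

type GuardedResult<T> =
  | { outcome: "executed"; value: T; mandate: Mandate }
  | { outcome: "denied"; reason: string };

function guard<T>(
  action: string,
  authorize: Authorizer,
  tool: (resource: string, mandate: Mandate) => T
): (resource: string) => GuardedResult<T> {
  return (resource: string): GuardedResult<T> => {
    // 1. Interception: the caller holds this wrapper, never the raw tool.
    // 2. Policy check: ask the authorizer before anything touches the OS.
    const result = authorize(action, resource);
    if (result.decision === "deny") {
      return { outcome: "denied", reason: result.reason };
    }
    // 3. Mandate issuance: the authorizer returned a mandate for this exact call.
    // 4. Execution: only now does the tool run, with the mandate attached.
    const value = tool(resource, result.mandate);
    return { outcome: "executed", value: value, mandate: result.mandate };
  };
}

// Toy authorizer standing in for the sidecar: allows writes under one directory only.
const toyAuthorizer: Authorizer = (action, resource) => {
  if (action === "fs.write" && resource.indexOf("/workspace/data/reports/") === 0) {
    return { decision: "allow", mandate: { action: action, resource: resource, issuedAt: Date.now() } };
  }
  return { decision: "deny", reason: "explicit_deny" };
};

const touched: string[] = []; // records which resources the "tool" actually reached
const guardedWrite = guard("fs.write", toyAuthorizer, (resource) => {
  touched.push(resource);
  return resource.length;
});

const reportWrite = guardedWrite("/workspace/data/reports/q3.csv");
const passwdWrite = guardedWrite("/etc/passwd");
console.log(reportWrite.outcome, passwdWrite.outcome, touched.length); // prints: executed denied 1
```

The design point is that the denied call never reaches the tool body at all: `touched` holds one entry, not two.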

Phase 2: Post-Execution Deterministic Verification (The Proof)

The second critical flaw in current architectures is state verification. If an agent clicks "checkout" in a browser, how does the system know it succeeded? Typically, the framework asks the LLM to look at the HTML and guess. This is slow, token-expensive, and prone to hallucination.

Predicate moves verification from probabilistic guessing to deterministic proof.

1. The Token Efficiency of predicate-runtime

We built the predicate-runtime SDK to handle web perception. It routes the headless browser through a Chrome extension and a remote gateway that uses ML reranking to aggressively compress the DOM state.

Without Predicate Snapshot

- Elements: 4,500+

- Tokens: ~600K per page

- Cost: $18+ per page observation

- Hallucination risk: High

With Predicate Snapshot

- Elements: 50-100 (ranked)

- Tokens: ~1,200 per page

- Cost: $0.04 per page observation

- Hallucination risk: Minimal
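As a quick sanity check on the comparison above, the arithmetic can be worked through directly. The token and cost figures are copied from the two lists; the per-million-token price is derived from them and is not a quoted model rate.

```typescript
// Figures copied from the comparison above.
const rawPage = { tokens: 600000, costUsd: 18 };
const snapshotPage = { tokens: 1200, costUsd: 0.04 };

// Implied price per million tokens (derived from the figures, not a quoted rate).
function usdPerMillionTokens(page: { tokens: number; costUsd: number }): number {
  return (page.costUsd / page.tokens) * 1e6;
}

// Fractional reduction going from `before` to `after`.
function reduction(before: number, after: number): number {
  return 1 - after / before;
}

console.log(usdPerMillionTokens(rawPage).toFixed(2));      // "30.00"
console.log(usdPerMillionTokens(snapshotPage).toFixed(2)); // "33.33"
console.log((reduction(rawPage.tokens, snapshotPage.tokens) * 100).toFixed(1) + "%");   // "99.8%"
console.log((reduction(rawPage.costUsd, snapshotPage.costUsd) * 100).toFixed(1) + "%"); // "99.8%"
```

Both rows imply roughly the same price per token, so the cost saving in this comparison comes almost entirely from sending fewer tokens.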

In our demos, extracting data from a dynamic site like Hacker News used only 1,206 prompt tokens per reasoning step, compared to tens of thousands for raw HTML.

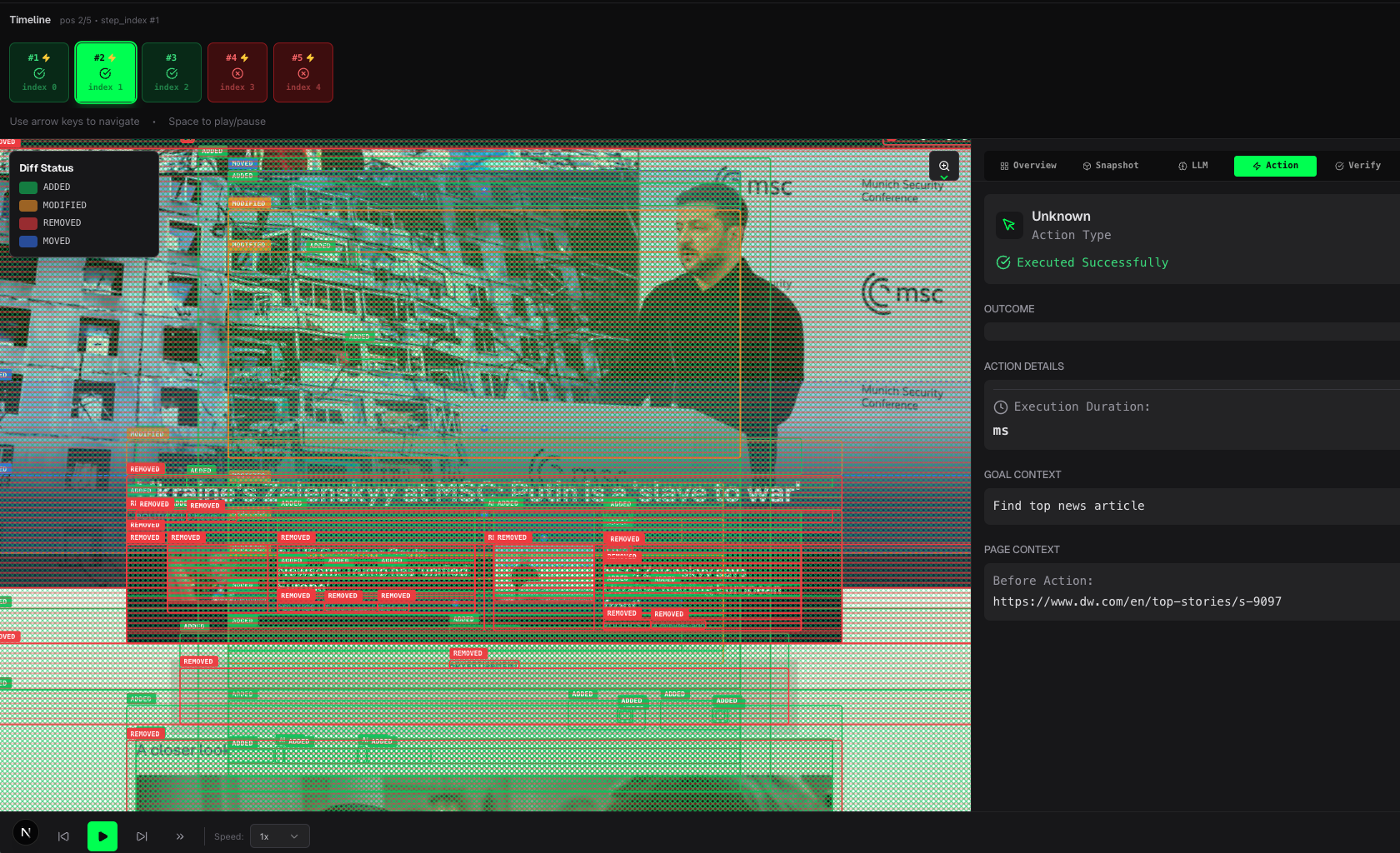

Predicate Studio: DOM state diff view showing BEFORE and AFTER an agent action

2. Mathematical Assertions (No LLM-as-Judge)

Instead of "LLM-as-judge," we use deterministic code-based assertions:

```typescript
// Deterministic verification - no LLM involved
await runtime.verify({
  url_contains: "/checkout/success",
  element_exists: "[data-testid='confirmation']",
  dom_contains: "Order confirmed"
}).eventually({ timeout: 5000 });
```

These assertions provide mathematical certainty that the agent successfully completed the task:

- Does the URL now contain /checkout/success? (url_contains)
- Does the confirmation button now exist in the DOM? (element_exists)
- Does the local file now exist and contain data? (fs.exists)
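What makes checks like these deterministic is that each one is a plain predicate over observed state: the same snapshot always yields the same answer. Here is a minimal sketch, assuming a hypothetical `PageSnapshot` shape and `checkSnapshot` function; the real predicate-runtime types are not shown in this article.

```typescript
// Hypothetical shapes; not the predicate-runtime types.
interface PageSnapshot {
  url: string;
  text: string;        // visible text of the page
  selectors: string[]; // selectors known to be present in the DOM
}

interface Expectation {
  url_contains?: string;
  element_exists?: string;
  dom_contains?: string;
}

// Returns the names of the failed checks; an empty array means every check passed.
function checkSnapshot(snap: PageSnapshot, expected: Expectation): string[] {
  const failures: string[] = [];
  if (expected.url_contains !== undefined && snap.url.indexOf(expected.url_contains) === -1) {
    failures.push("url_contains");
  }
  if (expected.element_exists !== undefined && snap.selectors.indexOf(expected.element_exists) === -1) {
    failures.push("element_exists");
  }
  if (expected.dom_contains !== undefined && snap.text.indexOf(expected.dom_contains) === -1) {
    failures.push("dom_contains");
  }
  return failures;
}

const checkoutExpectation: Expectation = {
  url_contains: "/checkout/success",
  element_exists: "[data-testid='confirmation']",
  dom_contains: "Order confirmed",
};

const successPage: PageSnapshot = {
  url: "https://shop.example/checkout/success?id=1",
  text: "Order confirmed. Thank you!",
  selectors: ["[data-testid='confirmation']"],
};

const pendingPage: PageSnapshot = {
  url: "https://shop.example/checkout",
  text: "Processing payment",
  selectors: ["[data-testid='spinner']"],
};

console.log(checkSnapshot(successPage, checkoutExpectation)); // no failures
console.log(checkSnapshot(pendingPage, checkoutExpectation)); // all three checks fail
```

Returning the list of failed checks, rather than a single boolean, gives the agent loop something specific to log or retry on.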

The .eventually() pattern handles SPA hydration without brittle sleep() calls.
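For readers unfamiliar with the pattern, here is a rough sketch of what an "eventually" helper does: poll a condition at a short interval until it holds or a deadline passes, instead of sleeping for a fixed guess. The `eventually` and `delay` functions below are hypothetical stand-ins written for this example, not the predicate-runtime implementation, and the 50 ms default interval is an arbitrary choice.

```typescript
// Hypothetical polling helper; not the predicate-runtime implementation.
function delay(ms: number): Promise<void> {
  return new Promise<void>((resolve) => setTimeout(resolve, ms));
}

// Resolves true as soon as `condition` holds, or false once `timeout` ms have elapsed.
async function eventually(
  condition: () => boolean | Promise<boolean>,
  options: { timeout: number; interval?: number }
): Promise<boolean> {
  const interval = options.interval === undefined ? 50 : options.interval;
  const deadline = Date.now() + options.timeout;
  while (true) {
    if (await condition()) return true;       // condition met: stop immediately
    if (Date.now() >= deadline) return false; // deadline passed: give up
    await delay(interval);                    // wait briefly, then re-check
  }
}

// Simulated hydration: the "page" only becomes ready on the third poll.
let pollCount = 0;
const pageHydrated = (): boolean => ++pollCount >= 3;

eventually(pageHydrated, { timeout: 1000, interval: 10 }).then((ready) => {
  console.log("ready:", ready, "after polls:", pollCount);
});
```

Compared with a fixed sleep, this returns as soon as the page is ready and fails explicitly when it never becomes ready.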

The New Infrastructure Standard

As the agent economy grows, the definition of an "agent" will shift from a raw LLM to a complex system containing an LLM, a sandbox, a policy engine, and a verifier.

The Architecture Shift

LangChain and CrewAI are the brains. Predicate is the nervous system and the immune system.

If you are tired of building toys and are ready to deploy robust, SOC2-compliant, autonomous workflows to production, it is time to deploy Runtime Trust Infrastructure.

Get Started

Add runtime trust to your first agent in under 5 minutes. Available for Python and TypeScript.
